NewEdge Unifier Gateway Atom

The Unifier Gateway follows the "control plane / data plane" separation principle: the S/P-GW is split into two components, S/P-GW-U (user plane) and S/P-GW-C (control plane).

This implementation is based on the CUPS (Control and User Plane Separation) architecture standardized by 3GPP, and results from a partnership between different actors.

Summary

The objective of the Unifier Gateway (UGW) is twofold. First, it merges a gateway for Wi-Fi access and a gateway for mobile access into a single network entity, providing a flexible point for managing IP traffic across several access types (mobile, Wi-Fi). This approach leads to the decentralization of the mobile packet core entities. Second, the UGW is implemented with virtualization techniques: its VNFCs (VNF components) are instantiated on virtual machines created on the execution platform.

The following components belong to the control plane:

- MME: Mobility Management (GTP-C entry point)
- S/P-GW-C: GTP-C end point
- DHCP server: DHCP server for LTE and Wi-Fi accesses
- SPGW-C & SDN controller, based on OpenDaylight: manages the S/P-GW-U control (GTP-U tunnel / SDN flow interworking)
- Authentication, Authorization and Accounting (AAA): authenticates, authorizes and accounts for the DHCP allocation strategy
- Home Subscriber Server (HSS): stores the customers' credentials

Some components belong to the data plane:

Dataplane components

- X-GW-U (based on Open vSwitch)
- Router-nat
- DHCP-relay

Access network

- Access point
- eNodeB
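The control plane / data plane split described above can be pictured with a toy model. The Python sketch below is purely illustrative: the class and method names are hypothetical and do not come from the UGW code base, OpenDaylight, or Open vSwitch. It only shows the division of roles, with the control plane deciding which GTP-U tunnel maps to which user and the user plane doing nothing but applying the rules it was given.

```python
# Illustrative only: class and method names are hypothetical and do not come
# from the UGW code base, OpenDaylight, or Open vSwitch.

class UserPlane:
    """Stands in for the S/P-GW-U: it forwards packets using installed rules only."""

    def __init__(self):
        self.flows = {}  # GTP-U tunnel endpoint ID (TEID) -> UE IP address

    def install_flow(self, teid, ue_ip):
        self.flows[teid] = ue_ip

    def lookup(self, teid):
        # A packet arriving on an unknown tunnel matches no rule.
        return self.flows.get(teid)


class ControlPlane:
    """Stands in for the S/P-GW-C: it handles signalling and programs the user plane."""

    def __init__(self, user_plane):
        self.user_plane = user_plane
        self.next_teid = 1

    def create_session(self, ue_ip):
        # Allocate a tunnel ID, then push the matching rule to the user plane.
        teid = self.next_teid
        self.next_teid += 1
        self.user_plane.install_flow(teid, ue_ip)
        return teid


user_plane = UserPlane()
control_plane = ControlPlane(user_plane)
teid = control_plane.create_session("10.0.0.2")
print(user_plane.lookup(teid))      # 10.0.0.2
print(user_plane.lookup(teid + 1))  # None
```

In the real gateway the "install a rule" step corresponds to the SDN controller programming flows on the X-GW-U; the address used here is an arbitrary example.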

User documentation

Refer to the documentation available under `doc/src/docs/asciidoc` and in `b-com/README.md`.

Files Directory

This project follows the layout below:

    ├── ~
    ├── ansible                 # contains the Ansible playbooks
    ├── dataplane               # contains files to deploy the dataplane components of WEF
    ├── tools                   # contains various scripts (shell or Python) to configure the WEF deployment
    ├── network-ugw             # contains shell scripts to manage the network of the WEF deployment
    │   ├── add_network.sh      # create the networks on the OpenStack provider
    │   ├── remove_network.sh   # remove the networks on the OpenStack provider
    │   └── install-rules.sh
    ├── Makefile
    ├── nodes.yml               # define the different nodes of the UGW
    ├── config.yml              # define the credentials to connect to the OpenStack provider
    ├── network.yml             # define the network configuration
    ├── README.md
    └── Vagrantfile

Configuration files for the Vagrantfile

- The `nodes.yml` file contains the list of component descriptions. A component model is defined as follows:

        - name: <name>
          ## parameters for the VirtualBox environment
          box: ubuntu/xenial64
          mem: 512
          cpus: 1
          nics: # internal-only interfaces
            - ip:
              network_name: MGNT
              method: dhcp
              auto_config: "True"
              type: private_network
            - ip:
              network_name: SGi
              method: dhcp
              auto_config: "True"
              type: private_network
          ## parameters for the OpenStack environment
          OS_FLOATING_IP_POOL: <network_external>
          OS_FLAVOR: m1.small
          OS_IMAGE: xenial
          ## list of all networks
          OS_NETWORKS:
            - MGNT
            - SGi

- The `config.yml` file contains the general configuration of the cloud credentials:

        openstack_auth_url: <URL_KEYSTONE_OPENSTACK>
        username: <userid>
        tenant_name: <tenant_id>
        key_file: <keyfile>
        key_name: <keyname>
        login: <login>

- The `network.yml` file contains the network configuration:

        - cidr: <cidr> # 192.168.97.0/24
          description:
          gateway: <ip gateway>
          name: <network name in cloud infra>
          name_oai: <OAI network name for the Ansible playbook>
          subnets:
          - allocation_pools:
            - end: <end ip> # 192.168.97.200
              start: <start ip> # 192.168.97.100
            cidr: <subnet> # 192.168.97.0/24
            gateway_ip: 192.168.97.1
            name: <subnet name in cloud infra>
          type: <private, vxlan, vlan, gre> # only private and vlan are supported
          vlan_tag: <tag> # tag for vlan (optional)
          vni_tag: <tag> # tag for vxlan (optional)
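Because the three files are filled in by hand, a quick sanity check before running Vagrant can catch leftover placeholders or an inverted allocation pool. The Python sketch below is a minimal illustration, not part of the repository: the helper names are hypothetical, the rule that every `nics` network must appear in `OS_NETWORKS` is an assumption drawn from the example model, and the data is passed as plain dictionaries such as a YAML parser would return.

```python
import ipaddress
import re

# Hypothetical helpers, not part of the repository. Each takes the data as
# plain Python objects, e.g. as returned by a YAML parser.

REQUIRED_CONFIG_KEYS = (
    "openstack_auth_url", "username", "tenant_name",
    "key_file", "key_name", "login",
)
PLACEHOLDER = re.compile(r"^<.*>$")  # a value still written as <something>


def check_config(config):
    """Report config.yml keys that are missing, empty, or still placeholders."""
    problems = []
    for key in REQUIRED_CONFIG_KEYS:
        value = str(config.get(key) or "").strip()
        if not value or PLACEHOLDER.match(value):
            problems.append(f"config.yml: {key} is not set")
    return problems


def check_node(node):
    """Assumption: every network used by a nic should appear in OS_NETWORKS."""
    listed = node.get("OS_NETWORKS") or []
    return [
        f"nodes.yml: {node.get('name')}: {nic.get('network_name')} not in OS_NETWORKS"
        for nic in node.get("nics") or []
        if nic.get("network_name") not in listed
    ]


def check_network(network):
    """Check the gateway and each allocation pool of a network.yml entry."""
    problems = []
    for subnet in network.get("subnets") or []:
        net = ipaddress.ip_network(subnet["cidr"])
        gateway = ipaddress.ip_address(subnet["gateway_ip"])
        if gateway not in net:
            problems.append(f"network.yml: gateway {gateway} is outside {net}")
        for pool in subnet.get("allocation_pools") or []:
            start = ipaddress.ip_address(pool["start"])
            end = ipaddress.ip_address(pool["end"])
            if start not in net or end not in net:
                problems.append(f"network.yml: pool {start}-{end} is outside {net}")
            if start > end:
                problems.append(f"network.yml: pool start {start} is after end {end}")
    return problems


# Values taken from the comments in the network.yml template.
network = {
    "cidr": "192.168.97.0/24",
    "subnets": [{
        "allocation_pools": [{"start": "192.168.97.100", "end": "192.168.97.200"}],
        "cidr": "192.168.97.0/24",
        "gateway_ip": "192.168.97.1",
    }],
}
print(check_network(network))  # []
```

Each helper returns a list of messages, so an empty list means nothing suspicious was found; the checks are deliberately shallow and do not replace what Vagrant or OpenStack will validate themselves.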

Environment deployment

Deployment Workflow

Requirements

- Ansible (> 2.4.3) and related dependencies
- Vagrant (> 1.9.3)
- Plugins
    - vagrant-openstack-provider (0.12.0)
    - vagrant-hostmanager: manages the `/etc/hosts` file on guest machines (and optionally the host)
    - vagrant-dns: allows you to configure a DNS server managing a development subdomain
    - [vagrant-cachier](https://github.com/fgrehm/vagrant-cachier): caches package manager files

    Note: install the Vagrant plugins

        $ vagrant plugin install vagrant-openstack-provider
        ...

- Python 3
- VirtualBox
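The version constraints above are strict lower bounds, which are easy to get wrong when comparing version strings by eye. The following Python sketch shows one way to compare them; the helper names are hypothetical, and the sample strings only imitate the kind of text that `vagrant --version` or `ansible --version` print, whose exact format varies between releases.

```python
import re


def parse_version(text):
    """Extract the first dotted version number found in text as a tuple of ints."""
    match = re.search(r"\d+(?:\.\d+)+", text)
    if match is None:
        raise ValueError(f"no version number found in {text!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def is_newer_than(found, minimum):
    """Return True if the version in `found` is strictly greater than `minimum`."""
    a, b = parse_version(found), parse_version(minimum)
    width = max(len(a), len(b))
    # Pad with zeros so that 2.4.3.0 and 2.4.3 compare as equal.
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a > b


print(is_newer_than("Vagrant 2.2.19", "1.9.3"))   # True
print(is_newer_than("ansible 2.4.3.0", "2.4.3"))  # False
```

Comparing tuples of integers avoids the classic string-comparison trap where "2.10" sorts before "2.4".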

Deployment on VirtualBox

TO BE COMPLETED

1. Create the networks and deploy the VNFs automatically

        vagrant up --provider=virtualbox

Deployment on OpenStack

1. Create the networks on the cloud. Start by defining the different subnets in `add_network.sh`:

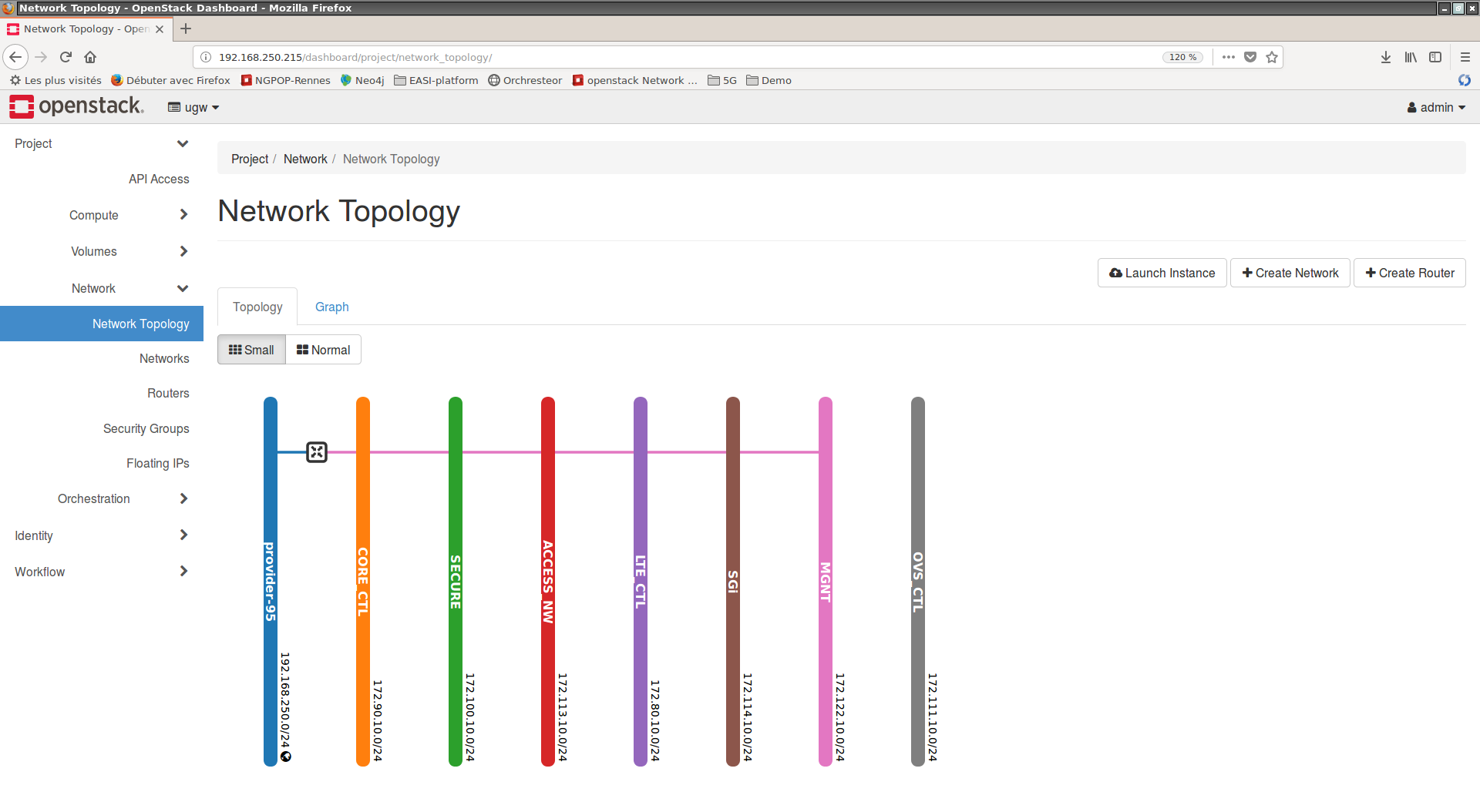

| Network | Description | Subnet | Start (default) | End (default) | Gateway (default) |
|---|---|---|---|---|---|
| LTE_CTL | | 172.80.10.0/24 | 172.80.10.100 | 172.80.10.200 | 172.80.10.1 |
| CORE_CTL | | 172.90.10.0/24 | 172.90.10.100 | 172.90.10.200 | 172.90.10.1 |
| SECURE | | 172.100.10.0/24 | 172.100.10.100 | 172.100.10.200 | 172.100.10.1 |
| OVS_CTL | | 172.111.10.0/24 | 172.111.10.100 | 172.111.10.200 | 172.111.10.1 |
| MGNT | | 172.112.10.0/24 | 172.112.10.100 | 172.112.10.200 | 172.112.10.1 |
| ACCESS_NW | | 172.113.10.0/24 | 172.113.10.100 | 172.113.10.200 | 172.113.10.1 |
| SGi | | 172.114.10.0/24 | 172.114.10.100 | 172.114.10.200 | 172.114.10.1 |
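The default values in the table follow a single pattern: within each /24 subnet the gateway is host .1 and the allocation pool runs from .100 to .200. The Python sketch below derives those values from a subnet with the standard `ipaddress` module. It is an observation about the table rather than a description of how `add_network.sh` computes them, and `default_plan` is a hypothetical helper.

```python
import ipaddress


def default_plan(cidr, gateway_host=1, start_host=100, end_host=200):
    """Derive the gateway and allocation pool of a subnet from host offsets."""
    net = ipaddress.ip_network(cidr)
    base = net.network_address
    return {
        "subnet": str(net),
        "start": str(base + start_host),
        "end": str(base + end_host),
        "gateway": str(base + gateway_host),
    }


# Two of the networks listed in the table.
for name, cidr in [("LTE_CTL", "172.80.10.0/24"), ("SGi", "172.114.10.0/24")]:
    print(name, default_plan(cidr))
```

Changing a subnet then only requires editing its CIDR, as long as the same offsets are acceptable.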

    $ cd network-ugw
    $ ./add_network.sh
    ...
    $ cd ..

- results :

Deploy VNF

    $ vagrant up --provider=openstack
    ....

Generate the configuration files for the Ansible playbook

    $ cd tools
    $ virtualenv venv
    $ . venv/bin/activate
    $ pip install -r requirements.txt
    ...
    $ ./generate-config-files.sh
    ...
    $ cd ..

Provision VNF from the Ansible playbook

    $ vagrant provision
    ...
    $ cd ansible && ansible-playbook -i ../.vagrant/provisioners/ansible/inventory/vagrant_ansible_inventory --extra-vars "@group_vars/conf_vm_release_wef_r1.yml" ./ugw-install.yml
    ...

- results :

Destroy VNF

    $ vagrant destroy
    ...

Deploy Dataplane of WEF

Deploy on OpenStack

- Create the internal network for the dataplane components. There are three config files:
    - `config.yml`: defines the cloud credentials
    - `nodes.yml`: defines the dataplane components
    - `networks.yml`: defines the network of the dataplane components

    Then run:

        $ cd dataplane
        $ ./add_network
        ...

- Deploy the dataplane components

        $ cd dataplane
        $ vagrant up --provider=openstack --no-provision
        $ vagrant provision
        $ cd ../ansible && ansible-playbook -i ../dataplane/.vagrant/provisioners/ansible/inventory/vagrant_ansible_inventory --extra-vars "@group_vars/conf_vm_release_wef_r1.yml" ./ugw-install.yml
        ...
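The two `ansible-playbook` invocations in this document differ only in the directory that holds the Vagrant-generated inventory: the project root for the UGW nodes and `dataplane` for the dataplane components. The Python sketch below builds both command lines from that one parameter. It is a hypothetical helper for illustration, it only assembles and prints the arguments without running anything, and paths are relative to the `ansible` directory as in the commands above.

```python
import shlex

# Location of the inventory that Vagrant generates, relative to the directory
# containing the Vagrantfile (taken from the commands in this document).
INVENTORY_SUFFIX = ".vagrant/provisioners/ansible/inventory/vagrant_ansible_inventory"


def playbook_command(vagrant_dir,
                     extra_vars="group_vars/conf_vm_release_wef_r1.yml",
                     playbook="./ugw-install.yml"):
    """Build the ansible-playbook argument list for one Vagrant environment."""
    inventory = f"{vagrant_dir.rstrip('/')}/{INVENTORY_SUFFIX}"
    return [
        "ansible-playbook",
        "-i", inventory,
        "--extra-vars", f"@{extra_vars}",
        playbook,
    ]


# UGW nodes (Vagrantfile at the project root), then dataplane components.
print(shlex.join(playbook_command("..")))
print(shlex.join(playbook_command("../dataplane")))
```

Keeping the arguments as a list makes it straightforward to hand them to `subprocess.run` later without worrying about shell quoting.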

Deploy on VirtualBox

TO BE COMPLETED

Task Lists

- [ ] Write description